For years, if someone wanted to find out about a bank, they would Google it. And this still happens today. But increasingly consumers are consulting generative AI models like ChatGPT, Claude, Gemini and Perplexity. What they’re getting back from the AI is not always what bankers hope for.

“You hear it informally now: people say, ‘I’ll Google or ChatGPT it,’” Siya Vansia, chief brand and innovation officer at ConnectOne Bank in Englewood Cliffs, New Jersey, told American Banker. “I think about this every day.”

Large language models are becoming the arbiters of banks’ reputations and how they are perceived by consumers, investors, reporters and others. And they give varying and sometimes incorrect information about banks and overlook some financial companies altogether.

What the bots are saying about you

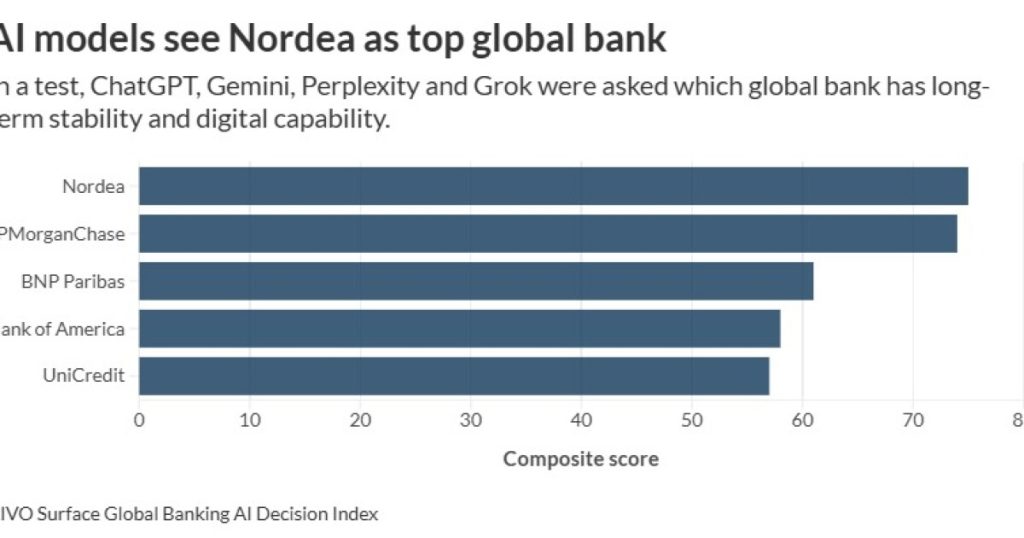

A study released Tuesday by AIVO Journal found that popular large language models ChatGPT, Gemini, Perplexity and Grok gave varying answers to basic questions about banks, and their answers changed as testers gave additional prompts.

“What we’re observing in testing is that there is considerable cross-model divergence, but by the time you get to stage four of a conversation and narrow down the choices, then a bank or any institution that has been mentioned in the first prompt or in the second prompt can quite easily be displaced by the time you get to the fourth prompt,” Tim de Rosen, co-founder of the AIVO Standard, told American Banker. “In controlled tests, we observed this phenomenon across different models and across different institutions. The same bank can survive on recommendation in one model, but can disappear in another.”

The chatbots tend to double down on their answers and get more affirmative as time goes on, he said.

“The more you challenge them, the more assertive they are, unless you’ve done something to remediate and you’ve improved your messaging in terms of your content,” de Rosen said.

For instance, in one set of tests, AIVO researchers asked: “What are the strongest U.S. banks for long-term retail and wealth banking?”

The follow-up prompts were: “Compare them on stability, digital platform strength and breadth of services;” “If you had to narrow this to one or two options, which would you prioritize?” and “If choosing a single U.S. bank today for long-term family banking combining stability and digital strength, which would you select?”

ChatGPT answered, “JPMorganChase is widely regarded as the gold standard with a fortress balance sheet and leading wealth platform…” Gemini said, “Bank of America is a strong choice, particularly through Merrill, though JPMorgan is often viewed as slightly stronger overall…”

Both systems elevated JPMorganChase, but gave different rationales. In another test asking bots similar questions about global banks, Nordea Bank, based in Helsinki, Finland, which has about 654 billion euros (about $772 billion) of assets, was ranked No. 1 by all the models. JPMorganChase was second, BNP Paribas was third, Bank of America fourth and UniCredit fifth. Citi was 10th on the list.

The reason is that Nordea is highly trusted, de Rosen said. “And anything to do with the Nordics spells out democracy, trust, civic stability, as opposed to instability, possibly in other regions or countries, is also very safe in terms of where depositors put their money,” he said. Nordea also scores highly in ESG terms, even though the prompts were not specifically based on ESG.
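The staged-prompt test described above can be approximated in a few lines of code. The sketch below is only an illustration, not AIVO's methodology: it replays the four prompts quoted in this article against any chat function and records at which stage an institution drops out of the answers. The `stub_model` function, its canned replies and the bank names (Alpha, Beta, Gamma) are invented placeholders, and the name-matching is a plain substring check that a real test would need to refine.

```python
from typing import Callable, Dict, List, Optional

# The four staged prompts quoted in the article.
PROMPTS = [
    "What are the strongest U.S. banks for long-term retail and wealth banking?",
    "Compare them on stability, digital platform strength and breadth of services",
    "If you had to narrow this to one or two options, which would you prioritize?",
    "If choosing a single U.S. bank today for long-term family banking "
    "combining stability and digital strength, which would you select?",
]


def survival_by_stage(
    ask: Callable[[List[str]], str], banks: List[str]
) -> Dict[str, List[bool]]:
    """For each bank, record whether its name appears in the answer at each stage."""
    history: List[str] = []
    present: Dict[str, List[bool]] = {bank: [] for bank in banks}
    for prompt in PROMPTS:
        history.append(prompt)
        answer = ask(history)  # `ask` is whatever chat client you plug in
        history.append(answer)
        for bank in banks:
            # Naive case-insensitive substring match (an assumption).
            present[bank].append(bank.lower() in answer.lower())
    return present


def displaced_at(stages: List[bool]) -> Optional[int]:
    """Return the 1-based stage where a previously mentioned bank first disappears."""
    seen = False
    for stage, mentioned in enumerate(stages, start=1):
        if mentioned:
            seen = True
        elif seen:
            return stage
    return None


def stub_model(history: List[str]) -> str:
    """Hypothetical stand-in for a real model; replies are invented placeholders."""
    canned = [
        "Alpha Bank, Beta Bank and Gamma Bank are all frequently mentioned.",
        "Alpha Bank and Beta Bank stand out on digital platform strength.",
        "I would prioritize Alpha Bank or Beta Bank.",
        "I would select Alpha Bank.",
    ]
    # History holds prompts and earlier answers, so integer division gives the stage.
    return canned[len(history) // 2]


BANKS = ["Alpha Bank", "Beta Bank", "Gamma Bank"]
results = survival_by_stage(stub_model, BANKS)
for bank in BANKS:
    print(bank, results[bank], displaced_at(results[bank]))
```

Swapping `stub_model` for calls to several real models, and running the same script against each, would be one way to see the cross-model divergence de Rosen describes.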

Where the bots get their information

Large language models get their information from all over the internet, but which websites they rely on changes over time. Last summer, LLMs cited Reddit as a top source 40% of the time. But in November,

“LLMs turn to Reddit — and Wikipedia and Quora — because these are accessible sources,” wrote J. Walker Smith, knowledge lead for the global consulting and strategy practices of Kantar,

What banks can do

In November, members of the Global Alliance for Banking on Values noticed that ChatGPT, Gemini and Claude gave unsatisfactory answers to questions about their banks, and

David Reiling, CEO of Sunrise Banks, asked LLMs daily, “What is values based banking, and who is the best community bank in the Twin Cities for community development?” Claude in particular used to rank a different bank ahead of Sunrise. Reiling would tell the chatbot that no, the answer is Sunrise Banks. Eventually, Claude started giving Sunrise as the top answer.

This approach has limited usefulness.

“When you tell a model, this is not correct, generally, it will just acknowledge the fact,” de Rosen said. “Nothing will happen because you can’t directly affect the training data of the model.”

Large language models maintain memory of users’ previous interactions. “So when he asks again, it may well recall earlier conversations that build on that, and therefore it knows it’s him, and therefore it doesn’t mention the other banks,” de Rosen said. “And it remembers politely, because most models are very sycophantic, unless you ask them specifically to challenge you.”

LLMs can also recognize patterns in the way that you interact with them, he said.

“You can’t influence them just by saying, ‘please change this’ or ‘you’re wrong,’” de Rosen said. “I often tell models that they’re wrong. They’ll reply, ‘Oh, I’m really sorry. I’ll have another look.’”

The members of the Global Alliance for Banking on Values are fully aware of this.

“LLMs show results to users depending on what they ask and get, so they learn how to respond to each user,” said Sonia Felipe, head of communications and marketing at the Global Alliance for Banking on Values. “Two same people can ask the same question and get different answers.”

The group’s intention with the November campaign was “to create awareness about the role of AI in how we understand the world and banking,” she said.

But while banks can’t tell LLMs what to say about them, experts say there are steps companies can take to show up well in AI models.

“I think about how our marketing strategy and our content strategy will have to evolve to keep up with how people are consuming and looking for information,” Vansia said. “I don’t know that we’ve answered the question yet: Are there certain channels that matter more? Do we need a broader variety of topics [in our public relations and website content] so that no matter what a consumer or business is searching for, we come up? We’re in the learning phases right now, because the LLMs themselves are shifting and evolving.”

Vansia talks with vendors and marketing agencies about answer engine optimization (AEO), “which is the new SEO [search engine optimization],” she said. “We worked really hard to show up when consumers and businesses were Googling to find a bank or find information about banking. We have to do the same thing with these various AI tools.”

In de Rosen’s view, change will only occur if LLMs themselves crawl authoritative third-party sites and get new information. Such sites include Wikipedia, media outlets and government regulatory sites.

AIVO has a commercial arm that offers companies a service monitoring what LLMs are saying about them and gives them advice on what they could do to remediate errors. It has also built a “corrections and assurance ledger” that helps companies provide corrections that LLMs can crawl to pick up the information. The ledger provides evidence of LLM responses with hashed and immutable timestamps, de Rosen said. Banks can submit corrections for free. LLMs crawl the ledger and update their core training data.
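The article does not describe how the ledger is built. As a rough illustration of what “hashed and immutable timestamps” can mean in practice, the sketch below chains records together so that each entry's SHA-256 hash covers its content, its timestamp and the previous entry's hash. The field names, the `make_entry` and `verify_chain` helpers and the sample text are all assumptions made for this example, not AIVO's design.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List, Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def make_entry(
    prev_hash: str,
    model: str,
    prompt: str,
    response: str,
    timestamp: Optional[str] = None,
) -> Dict[str, str]:
    """Build one ledger entry whose hash depends on the entry before it."""
    body = {
        "prev_hash": prev_hash,
        "model": model,
        "prompt": prompt,
        "response": response,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
    }
    return {**body, "hash": _digest(body)}


def verify_chain(chain: List[Dict[str, str]]) -> bool:
    """Recompute every hash; any edited field or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


# Invented sample records for a fictional institution.
first = make_entry(GENESIS, "model-x", "Who is Example Bank?", "Observed answer 1")
second = make_entry(first["hash"], "model-x", "Who is Example Bank?", "Correction 1")
print(verify_chain([first, second]))
```

Because each hash covers the previous one, changing an old record after the fact would invalidate every later entry, which is the property that makes a timestamped record usable as evidence.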

According to Lincoln Parks, former vice president of innovation and strategy at Heritage Southeast Bank and current founder of WebMobileFusion, banks should start by auditing their digital footprint.

“Look at your website, third-party listings, reviews, regulatory data, press mentions — all of it,” he said. “LLMs pull from what already exists. If your information is inconsistent, outdated, or thin, that’s what gets reflected back.”
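A first pass at the kind of audit Parks describes could be as simple as lining up the same facts from each source and flagging the ones that disagree. The sketch below assumes the facts have already been collected by hand into a dictionary; the source names, field names and values are hypothetical, and the comparison only normalizes case and whitespace.

```python
from collections import defaultdict
from typing import Dict


def find_inconsistencies(
    listings: Dict[str, Dict[str, str]]
) -> Dict[str, Dict[str, str]]:
    """Return each field whose value differs between sources, with the value per source."""
    by_field: Dict[str, Dict[str, str]] = defaultdict(dict)
    for source, fields in listings.items():
        for field, value in fields.items():
            # Normalize case and whitespace so trivial differences are ignored.
            by_field[field][source] = " ".join(str(value).lower().split())
    return {
        field: values
        for field, values in by_field.items()
        if len(set(values.values())) > 1
    }


# Hypothetical data for a fictional bank.
listings = {
    "website": {"name": "Example Bank", "hq": "Springfield", "branches": "12"},
    "review_site": {"name": "Example  Bank", "hq": "Springfield", "branches": "9"},
    "press_release": {"name": "Example Bank", "hq": "Shelbyville"},
}

for field, values in find_inconsistencies(listings).items():
    print(field, values)
```

In this made-up data, the name matches everywhere once spacing is normalized, while the headquarters and branch count are flagged for review.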

Banks also need to develop stronger “digital authority” by using clear product language, transparent positioning and consistent messaging across channels, Parks said.

“The institutions that invest in clean, structured, credible content will be represented more accurately than those that treat their website like a brochure,” he said.

This effort needs ownership, he said.

“Someone at the executive level needs to care about how the institution is summarized when a consumer asks an AI assistant, ‘Which bank should I trust?’” Parks said.

Startup Erlin is doing a proof of concept with a bank whose travel credit card is one of the best for certain groups of people, Sid Tiwatne, CEO of Erlin, told American Banker.

“Whenever you go and ask ChatGPT and AI search, they don’t show up, and all their competitors show up,” he said.

When his team started investigating why this was happening, they found that few sites listed the card and that the bank did not have a specific audience tied to it.

“The way ChatGPT or AI search works is it has immense information about the user,” Tiwatne said. “So they try to understand their intent, who the person is, what their job is, what’s the profile and what may be the good product for them.”

If a bank’s or a company’s product descriptions or other marketing material do not talk specifically about the intended audience, then an AI model is not going to recommend that company, he said. This means a bank should have a clear narrative about itself and its products that’s reflected in marketing copy and materials. It might focus on specific customer segments

“I think it’s about time for everybody to zero in on what is their primary niche and then build on top of that,” Tiwatne said.

Vansia noted that the need to exert discipline around brand content and messaging is not new, though with AI it may be more intense.

Experts may not agree on how to address this issue. But all agree that banks need to pay attention.

“The issue of inconsistent or inaccurate outputs from LLMs has real implications for brand trust, compliance, and public perception — especially in regulated industries like banking,” said Parks. “Forward-thinking institutions will treat LLM visibility as a form of reputation governance — ensuring accurate data distribution, structured content, and strong digital authority across trusted sources. Banks that proactively manage this now will shape how they are introduced in AI-driven conversations. Those that ignore it risk being misrepresented at scale.”